Human Segmentation Simulation on Isaac Sim

A simulation in NVIDIA Isaac Sim where a wheeled robot equipped with a camera detects humans in real time using semantic segmentation.

In this project, I developed a Software-in-the-Loop (SIL) testing simulation using NVIDIA Isaac Sim.

I used an NVIDIA Nova Carter robot as the base platform and mounted a camera sensor on it. The robot’s sensors and motors are fully interfaced with ROS2 using rosbridge.

For the segmentation model, I fine-tuned NVIDIA's PeopleSemSegNet, a U-Net-based semantic segmentation model.

Building Environment

For the environment, I used warehouse.usd. I added assets like forklifts, people, and other obstacles using the Isaac Assets tab.

Setting up Robot

You can directly import the Nova Carter ROS-equipped robot USD for this project. However, I used the base Nova Carter USD and designed my own action graph, rosbridge, and sensor connections to better understand how robot models function in Isaac Sim.

I used a differential controller action graph to implement control logic for the Nova Carter, which can also be controlled via teleop in ROS2.

Differential Drive Graph

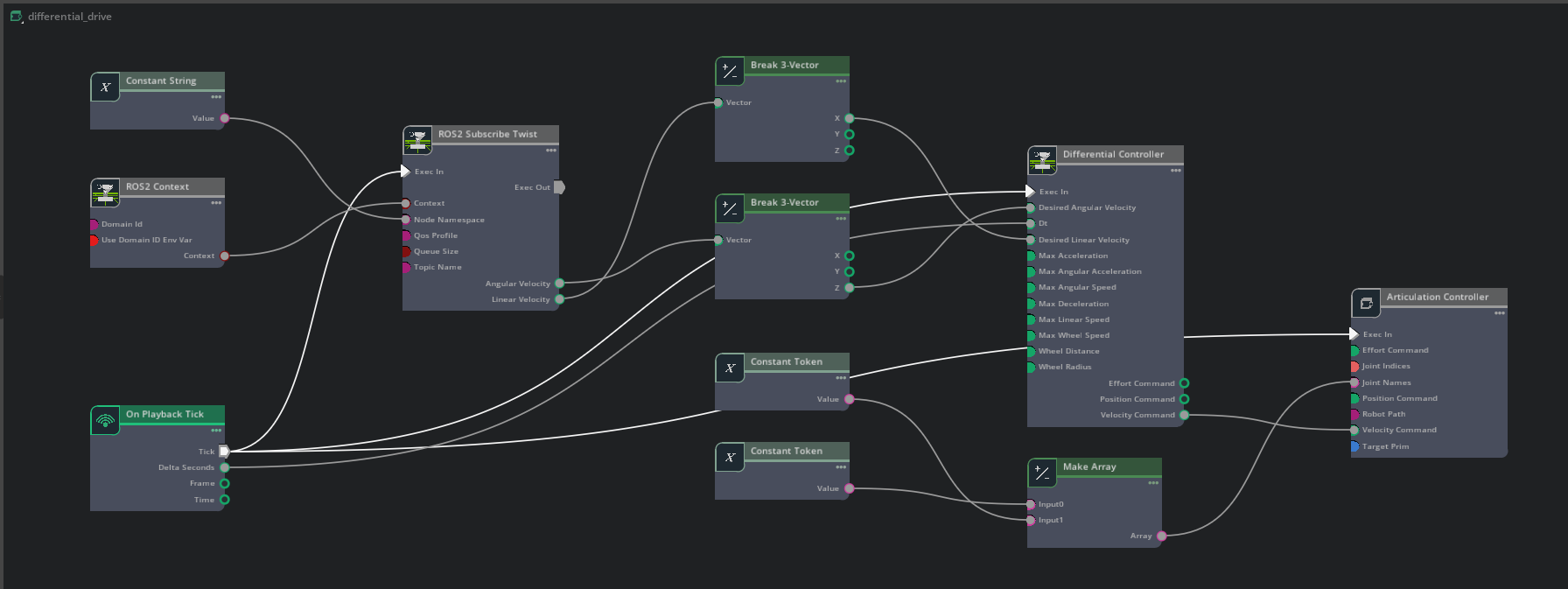

For the differential drive, I used the following nodes:

- ROS2 Context: Initializes the ROS2 environment within Isaac Sim.

- On Playback Tick: Triggers execution on each simulation step.

- ROS2 Subscribe Twist: Subscribes to /cmd_vel for velocity commands.

- Scale To/From Stage Units: Converts velocity units between ROS and USD stage.

- Two Break 3-Vector nodes: Breaks the Twist message into individual vector components.

- Make Array: Combines vector components into an array for control logic.

- Differential Controller: Converts linear/angular velocity into wheel speeds.

- Articulation Controller: Applies control values to wheel joints.

- Two Constant Token nodes: Specifies wheel joint paths for left and right wheels.

The diagram above shows how these nodes are connected.
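To make the Differential Controller step in the node list more concrete, here is a minimal sketch of standard differential-drive kinematics in plain Python. It is not the Isaac Sim node itself: the `Twist` class is a stand-in for the ROS2 message, and the wheel radius and wheel distance are placeholder values, not the Nova Carter's real dimensions.

```python
from dataclasses import dataclass


@dataclass
class Twist:
    """Minimal stand-in for geometry_msgs/Twist (illustration only)."""
    linear: tuple   # (x, y, z) in m/s
    angular: tuple  # (x, y, z) in rad/s


def twist_to_wheel_speeds(twist, wheel_radius, wheel_distance, max_wheel_speed=None):
    """Return (left, right) wheel angular velocities in rad/s."""
    v = twist.linear[0]   # forward speed: x component of the linear vector
    w = twist.angular[2]  # yaw rate: z component of the angular vector
    left = (v - w * wheel_distance / 2.0) / wheel_radius
    right = (v + w * wheel_distance / 2.0) / wheel_radius
    if max_wheel_speed is not None:
        # Optional clamp so a large command cannot exceed the wheel limit.
        left = max(-max_wheel_speed, min(max_wheel_speed, left))
        right = max(-max_wheel_speed, min(max_wheel_speed, right))
    return left, right


# Hypothetical geometry, chosen only to keep the numbers easy to follow.
WHEEL_RADIUS = 0.1    # metres
WHEEL_DISTANCE = 0.5  # metres between the two drive wheels

forward = twist_to_wheel_speeds(Twist((0.5, 0.0, 0.0), (0.0, 0.0, 0.0)),
                                WHEEL_RADIUS, WHEEL_DISTANCE)
spin = twist_to_wheel_speeds(Twist((0.0, 0.0, 0.0), (0.0, 0.0, 1.0)),
                             WHEEL_RADIUS, WHEEL_DISTANCE)
print("forward:", forward)  # both wheels turn at the same speed
print("spin:", spin)        # wheels turn in opposite directions
```

Only the linear x and angular z components are used, which is why the graph breaks each vector apart before passing values to the controller.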

People Segmentation Model

For semantic segmentation, I used Isaac Sim's Synthetic Data Generation tool to create random environments with varied human positions and obstacles. This tool also captures pixel-wise annotations and bounding boxes, which are essential for training segmentation models.
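The idea behind randomizing human and obstacle positions can be sketched without any simulator. The snippet below is a plain-Python illustration and does not use the Isaac Sim or Replicator API; the floor bounds, object counts, and minimum separation are all made-up values.

```python
import math
import random


def sample_positions(count, x_range, y_range, min_separation, rng, max_attempts=1000):
    """Rejection-sample `count` (x, y) points that stay at least min_separation apart."""
    positions = []
    attempts = 0
    while len(positions) < count:
        if attempts >= max_attempts:
            raise RuntimeError("could not place all objects; relax the constraints")
        attempts += 1
        candidate = (rng.uniform(*x_range), rng.uniform(*y_range))
        if all(math.dist(candidate, p) >= min_separation for p in positions):
            positions.append(candidate)
    return positions


def sample_scene(seed):
    """Build one randomized layout: 3 people and 2 obstacles (hypothetical counts)."""
    rng = random.Random(seed)  # a seeded generator makes each scene reproducible
    points = sample_positions(5, (-8.0, 8.0), (-5.0, 5.0), 1.0, rng)
    return {
        "people": points[:3],
        "obstacles": points[3:],
        "people_yaw_deg": [rng.uniform(0.0, 360.0) for _ in range(3)],
    }


scenes = [sample_scene(seed) for seed in range(4)]
for index, scene in enumerate(scenes):
    print(index, scene["people"])
```

Seeding each scene separately means any single layout can be regenerated later, which helps when a particular training image needs to be inspected.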

I used NVIDIA's PeopleSemSegNet model as a base. After deploying it on the robot, I fine-tuned it further using 4,000 synthetically generated environments. The goal was to try out training a model with the help of Isaac Sim.

You can skip the training step and directly use the pretrained PeopleSemSegNet model.

The model was trained over 200 epochs with a batch size of 16, taking approximately 5 hours on an RTX 3080 GPU (8GB VRAM).
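For a rough sense of training throughput, the figures above can be turned into a per-step time. This is back-of-the-envelope arithmetic that assumes one training image per generated environment and no held-out validation split, neither of which is stated above.

```python
import math

num_images = 4000  # assumption: one image per synthetic environment
batch_size = 16
epochs = 200
train_hours = 5

steps_per_epoch = math.ceil(num_images / batch_size)
total_steps = steps_per_epoch * epochs
seconds_per_step = train_hours * 3600 / total_steps

print(steps_per_epoch, total_steps, round(seconds_per_step, 2))
# prints: 250 50000 0.36
```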

As expected, there was only a small deviation in mIoU between my trained model and the pretrained base model.
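For reference, mIoU (mean intersection over union) can be computed from a predicted mask and a ground-truth mask as in the minimal sketch below. The class IDs (0 for background, 1 for person) and the tiny 2x4 masks are illustrative assumptions, not data from my experiment.

```python
def mean_iou(pred, target, num_classes):
    """Mean IoU over the classes that appear in either mask."""
    intersection = [0] * num_classes
    union = [0] * num_classes
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if p == t:
                # Pixel agrees: it is in both the intersection and the union.
                intersection[p] += 1
                union[p] += 1
            else:
                # Pixel disagrees: it enlarges the union of both classes involved.
                union[p] += 1
                union[t] += 1
    ious = [i / u for i, u in zip(intersection, union) if u > 0]
    if not ious:
        raise ValueError("masks are empty")
    return sum(ious) / len(ious)


# Toy masks: 0 = background, 1 = person (hypothetical labels).
target = [[0, 0, 1, 1],
          [0, 0, 1, 1]]
pred = [[0, 0, 0, 1],
        [0, 0, 1, 1]]

score = mean_iou(pred, target, num_classes=2)
print(f"mIoU = {score:.3f}")
```

Here the background IoU is 4/5 and the person IoU is 3/4, so the mean is 0.775.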

Conclusion and Closing Notes

I was able to deploy an end-to-end environment for Software-in-the-Loop (SIL) testing in Isaac Sim.

I learned how to deploy a ROS2-equipped robot in Isaac Sim and control it via teleop and differential control.

I also learned to read sensor data and generate synthetic datasets for training deep learning models.

Resources

- Isaac ROS U-NET Quickstart Guide: Full setup instructions for installing and running isaac_ros_unet, including binary install and building from source.

- NVIDIA DLI Course, Developing Robots with Software-in-the-Loop (SIL) using Isaac Sim & ROS 2: Learn fundamentals of SIL robotics development in Isaac Sim with hands-on labs.