Deep Racer

Inspired by AWS DeepRacer, we developed a car driven by an actor-critic model that can compete with a PID controller on lap time and safety.

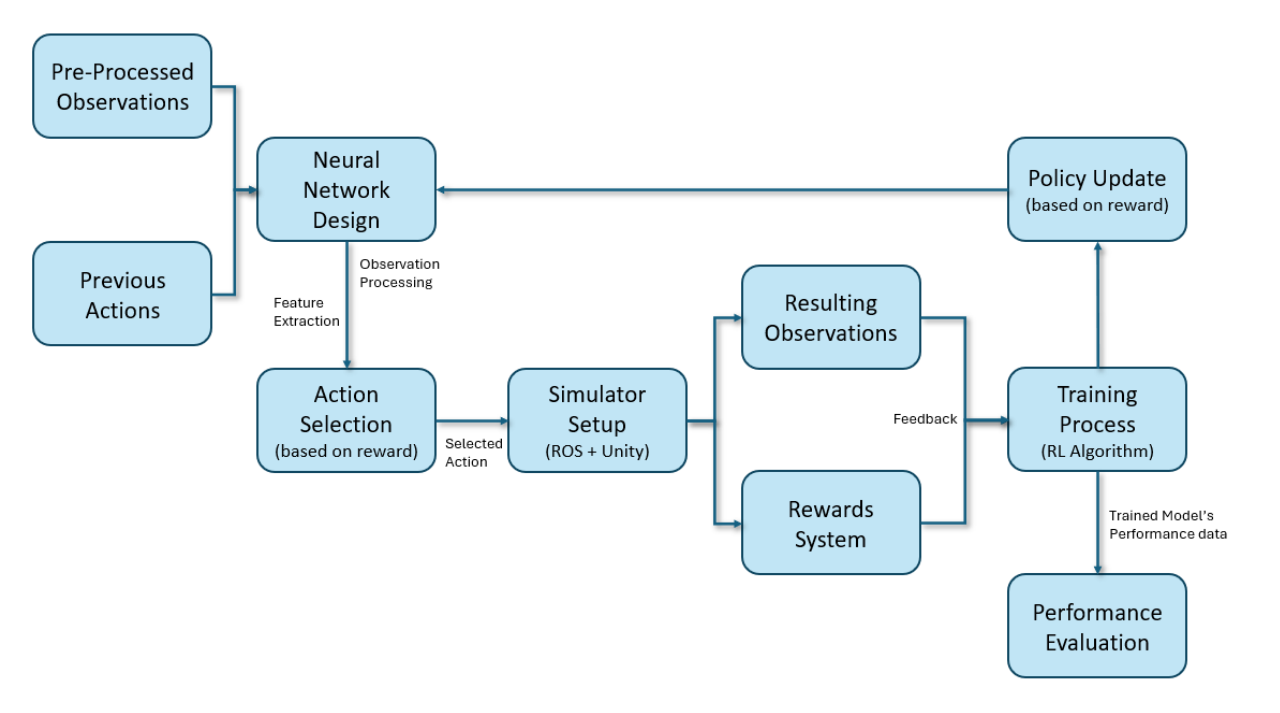

This project explores the application of reinforcement learning (RL) for optimizing vehicle speed in autonomous navigation. Using a Unity-based simulator integrated with ROS, we developed a system that balances speed and safety while improving lap times.

The research focuses on combining a Deep Q-Network (DQN) with CNN-based feature extraction to optimize speed dynamically in real time, enabling more efficient and adaptable autonomous navigation.

Prerequisites

Ensure you have the following dependencies installed before running this project:

- ROS Noetic

- Unity-based F1/10th Simulator

- Python 3.8+

- Ubuntu 20.04

Installation guides:

The simulator includes built-in sensors for LiDAR, IMU, and odometry.

ROS provides RViz, a visualization tool for viewing and interacting with standard ROS data types, such as point clouds and markers, in real time.

Autonomous Vehicle Simulation

The simulator provides a realistic testing environment with dynamic lighting, race tracks, and real-time sensor data.

Technology Stack

- Simulation: Audubon-Unity simulator (Unity-based)

- Framework: ROS (Robot Operating System)

- Deep Learning: Deep Q-Network (DQN), CNNs

- Sensors: LiDAR, Odometry

- Control: PID Controller

Reinforcement Learning Model

We employed a Deep Q-Network (DQN) to train the vehicle to adjust its speed dynamically based on sensor input.

- Observation Space: LiDAR scan data (1080 points) and previous actions.

- Action Space: Discretized speed control (35 actions).

- Reward System: Balanced incentives for speed efficiency and crash avoidance.
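As a rough illustration of the spaces listed above, the sketch below builds an observation from a 1080-point scan plus a short action history, maps each of the 35 action indices to a speed command, and picks an action epsilon-greedily. The speed range, the range clip, the history length, and every function name are assumptions made for this example, not values taken from the project.

```python
import random

NUM_BEAMS = 1080      # LiDAR points per scan (from the observation space above)
NUM_ACTIONS = 35      # discrete speed levels (from the action space above)
MIN_SPEED = 0.5       # m/s, assumed for illustration
MAX_SPEED = 7.0       # m/s, assumed for illustration
MAX_RANGE = 10.0      # m, assumed clipping distance used for normalization
HISTORY = 2           # number of previous actions kept, assumed


def action_to_speed(action):
    """Map an action index in [0, NUM_ACTIONS) to an evenly spaced speed."""
    if not 0 <= action < NUM_ACTIONS:
        raise ValueError("action index out of range")
    step = (MAX_SPEED - MIN_SPEED) / (NUM_ACTIONS - 1)
    return MIN_SPEED + action * step


def build_observation(scan, previous_actions):
    """Concatenate normalized LiDAR ranges with normalized previous actions."""
    if len(scan) != NUM_BEAMS or len(previous_actions) != HISTORY:
        raise ValueError("unexpected input size")
    ranges = [min(max(r, 0.0), MAX_RANGE) / MAX_RANGE for r in scan]
    history = [a / (NUM_ACTIONS - 1) for a in previous_actions]
    return ranges + history


def select_action(q_values, epsilon, rng=random):
    """Epsilon-greedy choice over the Q-values of the discrete speed levels."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda i: q_values[i])
```

With these assumed settings the observation has 1082 entries, and setting `epsilon` to 0 always returns the index of the highest Q-value.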

Neural Network Architecture

To process sensor inputs effectively, we used a combination of fully connected layers and CNN-based architectures for improved feature extraction.
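The layer sizes are not listed here, so the following is only a sketch of why a convolutional front end suits a 1080-point scan: it computes how far a stack of unpadded 1D convolutions shrinks the input before the fully connected layers. The kernel sizes, strides, and channel count are hypothetical.

```python
# Hypothetical (kernel_size, stride) pairs; not this project's actual layers.
CONV_LAYERS = [(8, 4), (4, 2), (3, 1)]
OUT_CHANNELS = 32     # assumed channel count of the last convolutional layer


def conv1d_output_length(length, kernel_size, stride):
    """Output length of a 1D convolution with no padding and no dilation."""
    if length < kernel_size:
        raise ValueError("input shorter than kernel")
    return (length - kernel_size) // stride + 1


def flattened_feature_size(num_beams=1080):
    """Number of features handed to the fully connected layers."""
    length = num_beams
    for kernel_size, stride in CONV_LAYERS:
        length = conv1d_output_length(length, kernel_size, stride)
    return length * OUT_CHANNELS
```

Under these assumptions the scan shrinks from 1080 to 269, then 133, then 131 positions, so the fully connected layers would receive 131 × 32 = 4192 features and map them to one Q-value per speed action.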

Training Process

The training process involved curriculum learning:

- Phase 1: Maximizing speed and optimizing lap times.

- Phase 2: Introducing safety measures and collision avoidance.

- Early Stopping: Prevented overfitting while balancing speed vs. safety.
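One way to picture the two phases is as a schedule that switches on the safety weight after a set number of episodes, paired with a patience-based stopping rule. The sketch below is illustrative only: the switch point, the weights, and the use of lap time as the stopping signal are assumptions, not the project's settings.

```python
PHASE_2_START = 500   # episode at which safety terms switch on, assumed


def reward_weights(episode):
    """Return (speed_weight, safety_weight) for a given training episode."""
    if episode < PHASE_2_START:
        return 1.0, 0.0   # Phase 1: speed and lap time only
    return 1.0, 2.0       # Phase 2: safety penalty enabled


def should_stop_early(lap_times, patience=20):
    """Stop once the best lap time has not improved for `patience` episodes."""
    if len(lap_times) <= patience:
        return False
    recent_best = min(lap_times[-patience:])
    earlier_best = min(lap_times[:-patience])
    return recent_best >= earlier_best
```

In a training loop, `reward_weights(episode)` would scale the speed and safety terms of the reward, and `should_stop_early` would be checked after each episode.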

Results and Evaluation

Our RL model came within 4 seconds (12.5%) of the lap time of a manually optimized PID controller.

- Lap Completion Time: RL model 36 s vs. PID controller 32 s.

- Performance: Adaptive braking and turn anticipation improved driving efficiency.

Challenges and Solutions

- Implemented CNNs for better feature extraction.

- Introduced reward shaping to prevent reckless speed prioritization.

- Trained the RL model with real-time simulation updates for improved decision-making.
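To make the reward-shaping idea concrete, here is a minimal sketch of a reward that still pays for speed but subtracts a penalty as the car gets close to obstacles. The function name, the clearance threshold, and all weights are hypothetical; the project's actual reward terms are not given here.

```python
def shaped_reward(speed, max_speed, min_clearance, crashed,
                  safe_clearance=1.0, clearance_weight=2.0, crash_penalty=100.0):
    """Reward fast driving, but penalize crashes and small obstacle clearance.

    min_clearance is the smallest LiDAR range in the current scan (meters).
    All default values are assumptions chosen for illustration.
    """
    if crashed:
        return -crash_penalty
    speed_term = speed / max_speed
    # Fraction by which the car is inside the assumed safe clearance (0 if outside).
    shortfall = max(0.0, safe_clearance - min_clearance) / safe_clearance
    return speed_term - clearance_weight * shortfall
```

With these defaults, full speed 0.25 m from a wall scores lower than half speed on open track, which is the behavior that discourages reckless speed.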

Limitations and Future Work

The current model requires significant training time (~23 hours). Future work will focus on:

- Exploring advanced RL techniques such as Advantage Actor-Critic (A2C) and Deep Deterministic Policy Gradient (DDPG).

- Enhancing reward mechanisms for better speed-safety trade-offs.

- Expanding the dataset with more diverse training environments.

Conclusion

This project demonstrated the feasibility of using Deep Q-Networks for speed optimization in autonomous vehicles. The results highlight the potential of RL in improving navigation efficiency, making it a promising approach for real-world deployment in self-driving applications.

Project Architecture

RL-based Speed Optimization in Action